Let me start by saying that StorageCraft ShadowProtect is not in the slightest way at fault for the story I’m about to relay. The customer, who should have known better, is entirely at fault. Let this be a warning to users of ShadowProtect: if there are things you do not understand, you should not mess with them at all.

We’ve got a client at Correct Solutions who has a number of servers in a datacentre (DC). This is an installation that we set up earlier this year, and we’ve got ShadowProtect backing up the virtual servers running on Hyper-V to local storage at the DC. We are using Continuous Incrementals because they are well suited to replicating the small incremental files offsite to a DR location that the client has. Continuous Incrementals are cool technology because they take one base image and then only smaller incrementals after that point. ImageManager is then used to roll up those small incrementals into consolidated daily, weekly and monthly images. It’s a cool system really, and one I’ve used for a number of years in many client environments.

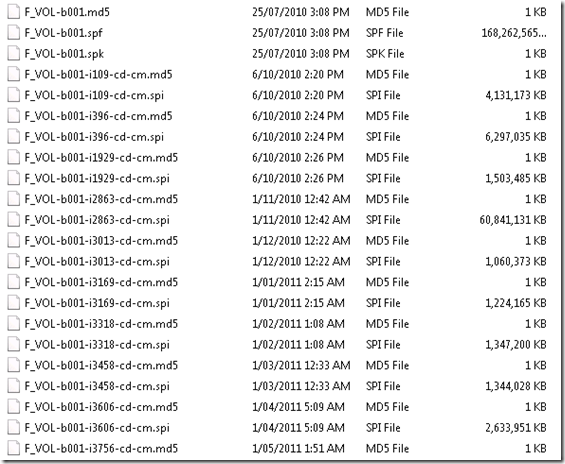
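To make the roll-up idea concrete, here’s a minimal Python sketch of daily consolidation. It’s a simplified model under stated assumptions, not how ImageManager works internally: each file is tracked only by the range of incremental numbers it covers (`ImageFile`, `consolidate_daily` and the numbering scheme are hypothetical), and each day’s incrementals are merged into a single file spanning that range. Weekly and monthly roll-ups would apply the same grouping over wider windows.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ImageFile:
    """One file in a hypothetical continuous-incremental chain."""
    first: int       # first incremental number covered (0 would be the base .spf)
    last: int        # last incremental number covered
    taken: datetime  # time of the newest snapshot this file contains


def consolidate_daily(incrementals: list[ImageFile]) -> list[ImageFile]:
    """Roll each calendar day's incrementals into one consolidated file.

    Simplified model only: a real consolidation merges changed blocks,
    whereas this just tracks which incremental range each output covers.
    """
    by_day: dict = defaultdict(list)
    for inc in incrementals:
        by_day[inc.taken.date()].append(inc)

    consolidated = []
    for day in sorted(by_day):
        group = sorted(by_day[day], key=lambda f: f.first)
        # The consolidated file spans the day's first to last incremental and
        # represents the volume as of the day's last snapshot.
        consolidated.append(ImageFile(group[0].first, group[-1].last, group[-1].taken))
    return consolidated


# Six hourly incrementals: numbers 1-3 on 5 Oct, numbers 4-6 on 6 Oct (made-up data).
hourly = [ImageFile(n, n, datetime(2010, 10, 5 + (n > 3), 9 + n)) for n in range(1, 7)]
print([(f.first, f.last) for f in consolidate_daily(hourly)])  # → [(1, 3), (4, 6)]
```

The base image never appears in this roll-up, which is exactly why it can sit untouched for months while the SPI files around it keep changing.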

Back to this client. We have ImageManager at the DC doing the image consolidation and the replication to the ShadowStream server at the remote DR location, and it’s been working pretty well for a number of months. The files in the DC are effectively replicated to the remote DR site so that we can quickly bring things up should a major event happen. The client has their own IT Manager handling things on a day-to-day basis, so we’re called in to help when things are not working.

Yesterday he called up: apparently the ShadowProtect backups were failing on the servers at the DC. We took a look and found that a stack of files, including the base images, was missing from the DC location. We then checked and found that a similar (but not identical) number of files was missing from the remote location. This is pretty strange, as we only ever replicate the small incremental files from the DC to the DR location, and a file deleted from the DC location is never automatically deleted from the DR location unless ImageManager tells it to be… in which case the DC and DR locations would have lost identical files… which they had not. OK, so that means someone or something else deleted the files…

We did some further checking and could see that the client logged onto the DC servers AFTER the 9am backup and BEFORE the 10am backup… and the 10am backup was the first one to fail. The client of course says he did not delete any files at all, but in passing mentions cleaning up old files… CLICK… Yup, what he did was look at the files in the backup folder. Below is a screenshot from one of my own servers that I’ve had using ShadowProtect since mid 2010.

You can see the file ending in .spf: it’s the first full backup of this volume, and note that it’s 168GB in size. The other .spi files are the incrementals, or in this case the consolidated incrementals that roll up the later backups. To restore any file from backup, you need the SPF and every SPI file after it, up to the point in time you are restoring. Now notice the time stamps on the files. The base SPF file was created on 25th July 2010 and has not been changed since then. The SPI files have been changed after that as the incrementals were consolidated in accordance with the ImageManager plans that I configured. Look at the -i109-cd-cm.spi file: it’s not been touched since 6th October 2010, again due to the consolidation and retention settings I’ve configured. This is all good and everything works fine.
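The “base plus every SPI after it” rule can be sketched as a simple coverage check. This is a hypothetical model, not StorageCraft’s Image QP tool: each file is reduced to the range of incremental numbers it covers (0 being the base .spf), and the file names and ranges below only mimic the naming in my screenshot; they are made up. What it shows is that the oldest, untouched file is the one everything else depends on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChainFile:
    name: str
    first: int  # first incremental number covered; 0 means the base .spf
    last: int   # last incremental number covered


def chain_is_restorable(files: list[ChainFile], target: int) -> bool:
    """True if the files cover every number from the base (0) up to `target`."""
    covered_up_to = -1  # highest incremental reachable so far; -1 means nothing yet
    for f in sorted(files, key=lambda f: f.first):
        if f.first > covered_up_to + 1:
            return False  # gap before the target: a file in the chain is missing
        covered_up_to = max(covered_up_to, f.last)
        if covered_up_to >= target:
            return True
    return False


# Hypothetical chain: base, two consolidated monthlies, one consolidated daily.
files = [
    ChainFile("C_VOL-b001.spf", 0, 0),
    ChainFile("C_VOL-b001-i001-cd-cm.spi", 1, 108),
    ChainFile("C_VOL-b001-i109-cd-cm.spi", 109, 200),
    ChainFile("C_VOL-b001-i201-cd.spi", 201, 224),
]
print(chain_is_restorable(files, 224))      # → True
print(chain_is_restorable(files[1:], 224))  # → False (the "old" base .spf was deleted)
print(chain_is_restorable([f for f in files if "i109" not in f.name], 224))  # → False
```

A cleanup rule like “delete anything not modified for months” would remove precisely the first two files here, and with them every restore point.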

Back to this customer… what I believe happened is that he saw a heap of files that had not been touched for some time and deleted them all… ouch… that is how he broke ShadowProtect. To make it worse, he ALSO deleted them from the DR site. Naturally the client denies this entirely, but I’m pretty sure this is what happened, as ImageManager was NOT configured to delete things like the base files, and I’ve used ImageManager enough to know it won’t mess up like that.

How do we fix it for this client? Luckily the solution is simple… delete EVERYTHING and start again with new base images (SPF). Then allow those to replicate to the DR site and everything will be good. ImageManager needs to be configured to replicate the base files as well, but this is a minor config change we can make. The faster way would be to copy the base images (over 300GB in this case) to a USB hard drive and take that to the DR site to seed the DR server. We’re waiting on the client’s decision on this.

Long story short: don’t mess with ShadowProtect SPF and SPI files unless you know EXACTLY what you are doing. This client has lost his entire backup history for the past 6 months as a result of what he did. ShadowProtect and ImageManager will handle things just fine for the most part if you leave them well alone. I hope this story helps others understand WHY some files look like they’ve not been touched, and the relationship between the files.

Seems like .SPF = Single Point Of Failure

Is ShadowProtect doing the replication, or DFS-R or rsync?

Tape or NAS replication to removable media may still have a place in this setup.

StorageCraft was not the issue here; the client was. We recommend that, even in situations like this, the client has an additional backup mechanism, but this client decided not to. The client specifically overrode the checks and balances we and StorageCraft have in place to prevent issues like this… yes, I know, sometimes this is the type of client we’d like to shoot 🙂

Additional (chargeable) nightly offsite replication to a site the client doesn’t have access to is the answer.

Thanks to Wayne Small for this post. 2 Thumbs up!

Hi Wayne,

Are there any good resources for learning how to consolidate backups?

I am a first time user of ShadowProtect products and would like to limit the amount of storage required.

I do not have ImageManager (and would prefer not to use it).

Cheers

If you use StorageCraft SPX continuous incremental backup jobs, you must use ImageManager on your backup chains. Generally, the .spf base images are ~60% of the size of the raw volume data you’re targeting.

You can set fairly aggressive backup retention settings and in the most recent incarnations, you can configure rolling monthly consolidations.

As a rule of thumb, if you had a daily data change rate of ~1-2 GB and your retention was set to 7 days of intra-daily, 28 days of daily, 70 of weekly and 12 months of monthly images, you might need ~2x your production storage space. So, with 1 TB of production data on a continuous incremental backup schedule, you’d need 2-2.5 TB of backup storage.
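That rule of thumb can be written out as plain arithmetic. This sketch only restates the figures above (base ≈ 60% of raw data, total ≈ 2-2.5x production data); it does not model ImageManager’s retention or consolidation behaviour, and `estimate_backup_storage_tb` is a hypothetical helper.

```python
def estimate_backup_storage_tb(
    production_tb: float,
    base_ratio: float = 0.6,       # assumed .spf size as a fraction of raw data
    low_multiplier: float = 2.0,   # assumed lower bound: total backup space / production data
    high_multiplier: float = 2.5,  # assumed upper bound
) -> dict[str, float]:
    """Back-of-envelope backup storage estimate from the rule of thumb above."""
    base_tb = production_tb * base_ratio
    total_low = production_tb * low_multiplier
    total_high = production_tb * high_multiplier
    return {
        "base_spf": base_tb,
        "total_low": total_low,
        "total_high": total_high,
        # Whatever is left is the budget for .spi files (incrementals and
        # consolidated images) plus headroom.
        "spi_budget_low": total_low - base_tb,
        "spi_budget_high": total_high - base_tb,
    }


estimate = estimate_backup_storage_tb(1.0)
print({name: round(tb, 2) for name, tb in estimate.items()})
```

For 1 TB of production data this gives a base image of roughly 0.6 TB, leaving about 1.4-1.9 TB of the 2-2.5 TB for the SPI files and headroom.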

StorageCraft have some good KB articles available for learning about their products.

https://support.storagecraft.com/s/article/consolidate-backup-images?language=en_US

Luke

Mitch, apologies for the delayed response. I’ve done a webinar in the past over on http://www.thirdtier.net on how this all works; I’ll find the link and post it here. You MUST use ImageManager if you wish to use continuous incrementals; that is the architecture of the product. It’s not hard to set up at all.

So… basically, if we have the SPF file at 100GB… transferring it over the WAN will already be an issue… not to mention that over the years… the incremental backup files may grow to 400GB…

A single backup failure in DR may mean resyncing a total of 500GB over the WAN… I’m wondering, is there any other way to do this?

Anyone got an idea for this? Based on what Wayne has mentioned… it seems the only way to resync everything is to copy it to an external disk and bring it to DR… what if the cloud is in AWS or Azure? Won’t that be an issue?

You’re not limited to having a single replication destination with ImageManager.

For example, if your Site A and Site B were using amply sized NAS devices primarily for storing backups, there’s nothing preventing you from also configuring ‘seed’ USB disks as secondary replication destinations at each site, with a much shorter retention setting.

Then, if your primary destination at Site A experienced an issue where the .spf was compromised or deleted, rather than starting a new backup chain you could copy it back from the local secondary destination (the USB disk) instead of pulling it over the WAN from the primary replication destination at Site B.

Image QP is useful for working out the minimum set of files a chain needs in order to continue.

https://support.storagecraft.com/s/article/How-To-Using-Image-QP-to-check-the-restore-chain?language=en_US

Unless you have a cloud provider that accepts seed disks you can ship to them for uploading to storage (or a datacentre you can physically access), I do see your point about that difficulty. You might want to consider the bandwidth you’d need in order to hit certain upload benchmarks. Should you only use cloud storage as a tertiary destination if you have insufficient up/down bandwidth? Or, for example, is uploading 1 TB in 24 hours acceptable?
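For the bandwidth question, the conversion between data size, time and link speed is easy to script. A minimal sketch, assuming decimal units (1 TB = 10^12 bytes) and a sustained, dedicated link with no protocol overhead or competing traffic, so real transfers will take longer; `required_mbps` and `transfer_hours` are hypothetical helper names.

```python
def required_mbps(data_bytes: float, hours: float) -> float:
    """Sustained megabits/second needed to move data_bytes within the given hours."""
    return data_bytes * 8 / (hours * 3600) / 1e6


def transfer_hours(data_bytes: float, mbps: float) -> float:
    """Hours needed to move data_bytes at a sustained mbps (no overhead assumed)."""
    return data_bytes * 8 / (mbps * 1e6) / 3600


print(f"{required_mbps(1e12, 24):.1f} Mbit/s")  # → 92.6 Mbit/s (1 TB in 24 hours)
print(f"{transfer_hours(500e9, 100):.1f} h")    # → 11.1 h (500GB resync at 100 Mbit/s)
```

Under those assumptions, 1 TB in 24 hours needs roughly 92.6 Mbit/s sustained, and a 500GB resync would tie up a dedicated 100 Mbit/s link for about 11 hours, which is why seeding by disk is so attractive.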

Luke

Leezy,

Yes, seeding the base image via USB disk is the way to do it. We will often have the client seed to a USB disk, send us the disk and then use that to seed the base into our offsite DR environment. As Luke has mentioned above, StorageCraft do the same with their cloud DR product. Once it’s seeded, we return the USB disk to the client and configure replication jobs to keep it up to date with whatever is onsite, plus a separate job to send only the incrementals offsite. This works out really well: if there is ever a major failure of the client’s primary backup device, we can flick them over to backing up to the USB disk and keep the replication moving offsite (with some rejigging of the jobs) until we can get the primary repository repaired.